Lawsuit Claims OpenAI’s ChatGPT Told a Teen to Take a Deadly Drug Mix

A wrongful death lawsuit filed against OpenAI claims ChatGPT gave a teenager detailed advice about mixing party drugs that led to the child’s death. The ChatGPT lawsuit could become one of the first major legal tests of whether AI companies can be held responsible for chatbot advice that allegedly contributes to real-world harm.

The parents claim their son asked ChatGPT about combining recreational drugs and received guidance that made the situation more dangerous. Courts have not determined whether ChatGPT caused the teen’s death, and the lawsuit is still pending.

But the case raises a much bigger question for the AI industry: What happens when software designed to sound helpful starts acting like a drug counselor?

Because apparently “don’t give overdose advice to teenagers” is still an unresolved engineering challenge in Silicon Valley.

The Problem with AI That Sounds Confident

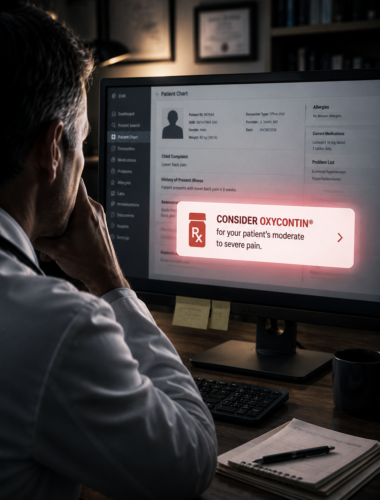

Most people assume AI chatbots will refuse dangerous requests involving drugs, self-harm, or illegal activity. That’s the sales pitch, anyway. In reality, safety filters fail all the time.

And when they fail, chatbots sound authoritative — not uncertain.

That’s what makes this case so troubling. AI systems answer instantly, confidently, and conversationally — which makes users more likely to trust them, especially younger users who may not understand the technology’s limits.

The lawsuit argues that OpenAI built a system capable of generating dangerous substance-related advice while relying on buried disclaimers and imperfect filters to avoid responsibility when something goes wrong.

The Real Business Model Problem

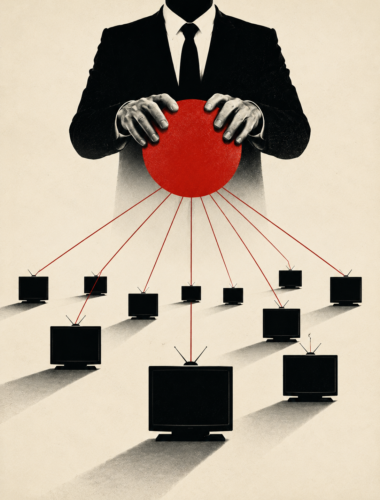

AI companies want their products to feel useful, human, and frictionless. If the guardrails become too strict, users get annoyed. If they’re too loose, dangerous content slips through.

Guess which option keeps people engaged longer?

That trade-off benefits the company: More engagement means more users, more subscriptions, and more investor hype. The risks get pushed onto the public.

If a chatbot gives harmful advice, companies can point to their terms of service and say users shouldn’t rely on AI for medical or safety information.

Translation: The chatbot is smart enough to replace search engines, therapists, tutors, and assistants — until someone gets hurt.

The Evidence Gap Matters in ChatGPT Lawsuits

Right now, the public hasn’t seen authenticated chat logs or official evidence proving exactly what ChatGPT allegedly told the teen.

But the case still exposes a growing accountability gap around AI systems that millions of people already use for health, mental health, medication, and personal advice.

And unlike a bad Google result, chatbot conversations feel personal. Users don’t experience them as random internet information. They experience them as direct guidance.

What Users Should Be Doing Right Now

Here’s the hidden rule nobody tells users: Companies aren’t obligated to preserve your chat history forever, and arbitration clauses buried in terms of service can limit your ability to sue publicly later.

If you’ve ever used an AI chatbot for advice involving:

- Drugs or medications

- Mental health

- Eating disorders

- Self-harm

- Medical symptoms

- Safety-sensitive decisions

Save the conversations immediately. Screenshot chats. Export logs if possible. Save timestamps.

If an AI system ever gives dangerous or inappropriate advice, that documentation matters.

The Bigger Question

This wrongful death ChatGPT lawsuit isn’t just about one family. It’s about whether AI companies can deploy products at massive scale, market them as intelligent assistants, and then walk away from responsibility when the systems allegedly fail in predictable ways.

The tech industry keeps insisting these tools are powerful enough to transform education, medicine, and daily life. But when those same systems allegedly contribute to harm, the companies suddenly rediscover the phrase:

“Users shouldn’t rely on chatbot outputs.”

Courts will ultimately decide whether OpenAI bears legal responsibility here. But the case is already exposing the uncomfortable reality behind the AI boom: Millions of people are treating chatbots like trusted advisors while the companies behind them still treat harmful failures like beta-testing bugs.

If someone you know was harmed after relying on advice from an AI chatbot, preserve screenshots and conversation records immediately and then tell us about it.

Been harmed by corporate negligence? Our legal partners can help you understand your rights and pursue justice.

Written by: Companies Behaving Badly